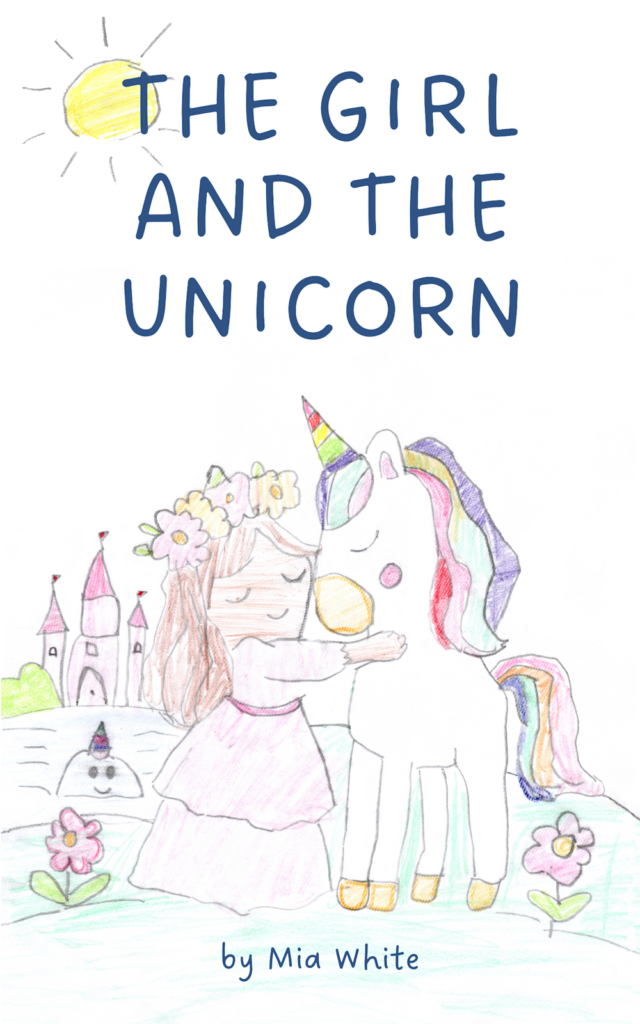

Shortly after my daughter turned six, we decided to work on a chapter book together. It’s called The Girl and the Unicorn.

When I was a young child living in New York, we used to visit my grandparents in Forest Hills almost every weekend. My grandmother had been a reading teacher before she became a mother, and during our visits she would encourage me to tell her stories while she typed them on an old-fashioned typewriter.

I remember narrating what felt like super long, detailed stories, and then, at the end, she would pull the lever and remove the paper, and it was a mere fraction of a page. The mind of a young child is a funny thing.

My wife and I read to our children every night, and I had shared these core memories with my daughter. I told her that whenever she wanted to write a story, I could type it out for her as my grandmother did for me. And since most of the stories she reads still have at least a few pictures, she asked if we could print it out after so she could illustrate it.

Not long after, my daughter and I took my son to his karate belt testing. I brought the usual bag with the coloring books, sticker books, workbooks, crayons, and other accoutrements, as I often do, but I also slipped in my AlphaSmart Neo 2, one of those dedicated LCD word processors that people of my generation used in middle school for in-class writing projects (and that are now rare and comically expensive).

The screen shows a handful of lines of text. It has a full-size keyboard and nothing else. It is a single-use item and almost feels analog despite being digital.

While he and the other students were practicing before the event, I asked if she wanted to start writing her story, and she enthusiastically agreed. So I pulled out the keyboard, and she began.

We had discussed what she wanted to write about before, and she had settled on a title of The Girl and the Unicorn. We’d arrived at that after I’d asked her some months back what story she wished existed and wanted to read, and that’s what she’d told me.

It’s amazing to watch a young, creative, unfettered mind at work: she dictated fluently and quickly. Our first session produced a couple of chapters. Another chapter was written in a Starbucks. And the rest were written on our couch or reclining side by side in her bed in the evening.

The hardest part by far was keeping up with her speed.

Biased as I may be, I thought, and still think, the story was adorable. My prompting for additional information was very limited, and my role as editor was primarily a matter of proofreading and light copyediting.

As anyone who has spent time with children can attest, their knowledge of grammar is, to put it euphemistically, imperfect. She also had a habit of starting most sentences with “then” and “after,” so I removed a small fraction of those for clarity and to cut down on repetition. In some cases, I split run-on sentences into smaller ones. Occasionally, I added a conjunction like “and” to help with pacing and variety. Otherwise, I didn’t change a thing.

I wanted her to understand the idea of drafting and revising. So after we finished the first draft—and even as we went through some of the chapters—I would read her what she had written and ask her if there were changes she wanted to make. Sometimes she noted repetition that she wanted to remove or details she wanted to add.

Once that second draft was done, I printed it out as a booklet and stapled it so I could read it to her start to finish, and we did some line editing together. I made marks with my pen to show her places where we needed to fix punctuation or where certain words were incorrect (mostly over her head, to be sure), but we also made notes about things she needed to change, like continuity errors. For example, there were times when a character who was elsewhere suddenly appeared, or when a character who was taking a nap acted as though they were awake.

She told me how she wanted to fix them, and we made the changes. A couple of chapters were disproportionately shorter than others, so she added some content.

With the text complete at ~4000 words over eight chapters, we went through the story and made a list of all the drawings she wanted to make in order to illustrate it: the girl, the unicorn, the castle, various other characters, scenery, settings, and a bunch of food (which she wanted to use as endpapers in the print edition). Over the coming months, she slowly added to her collection of illustrations (they weren’t made in book order, but you can still see how her drawing skills evolved over the course of the project).

Before we’d even started, she’d asked if it could be a “real” book, to which I’d said of course.

I’d also asked her if she wanted the story to be on my site, like most of my writing, so that anybody could read it, or if she wanted it just to be something somebody could buy on Amazon. And she was insistent that she wanted it to be available on the site itself, as well as something that we could print and give to her teachers and friends.

And so, with the text finished and the pictures complete (she did the cover illustration last), I scanned in all the images and laid out the book for publication.

These days, when you make your own print-on-demand book for Amazon and want to order a proof before officially releasing it for publication and purchase, they put a “Not for Resale” banner over the front cover. The last time I released a book, in 2019, they put a disclaimer on the inside.

The banner threw off her first experience holding her book just a smidge, but she was still over the moon. I’m not one to take photos of everything, but I really wish I had been able to capture her face at that moment and keep it forever.

The proof gave us one final chance to see how everything looked on paper and to confirm that she was happy with the cover and with the size and spacing of the illustrations.

With that final step complete, I made a couple of tweaks and told Amazon we were ready. With the click of a few buttons and a wait of just a few hours, it was live. Amazon print-on-demand is expensive per copy, but making something and putting it out there is easier than ever.

I then ordered a bunch of copies for her to give to her friends, family, and teachers.

She dedicated the book to young readers like herself, which I think is precious.

When she was dictating, some lines were just so innocent and tender that, as her father, I melted. I think it’s a legitimately enjoyable story, especially judged relative to the typical early reader fare. Other young children would enjoy it, and it would make great inspiration for other kids who want to write stories of their own.

She is a wonderful, sweet, and funny girl, and I was so glad to be able to make this for her and now share it with you. To her delight, The Girl and the Unicorn is available in print, ebook, and on this site.

At the end of the day, it’s hard to know what kind of parent you are and which things you do as a parent your kids will remember when they’re older. It’s impossible to know which memories will be formative and which will fade into the ether as mere wisps of experience, at most weaving the background fabric of the person they will become.

But this was special and worth it, even just for me.